Open-sourcing AI data of Deepseek in AR glasses

Posted by Technology Co., Ltd Shenzhen Mshilor

Open-sourcing AI data and technologies related to Deepseek in AR glasses can significantly enhance collaboration, innovation, and accessibility within the developer and research communities. Here are some potential aspects and benefits of such an initiative:

Potential Aspects of Open-Sourcing Deepseek AI Data

- Model and Algorithm Sharing:

  - Description: Sharing the underlying machine learning models and algorithms used for object recognition, environmental mapping, and gesture recognition.

  - Benefit: Allows developers to build upon existing technology, creating new applications and enhancements.

- Dataset Availability:

  - Description: Providing access to datasets used for training AI models, including annotated images, 3D scans, and sensor data.

  - Benefit: Facilitates research and experimentation in computer vision and AR, enabling more robust model training.

- Documentation and Tutorials:

  - Description: Offering comprehensive documentation and tutorials to help developers understand and utilize AI technologies effectively.

  - Benefit: Lowers the barrier to entry for new developers and encourages community contributions.

- Community Contributions:

  - Description: Encouraging developers to contribute improvements, bug fixes, and new features to the open-source project.

  - Benefit: Fosters a collaborative environment and accelerates innovation by leveraging diverse expertise.

- Interoperability Standards:

  - Description: Establishing standards for interoperability between different AR devices and platforms.

  - Benefit: Promotes compatibility and integration, allowing applications to work across various hardware.

- Research Collaborations:

  - Description: Partnering with academic institutions and research organizations to advance the field of AR and AI.

  - Benefit: Drives forward the state of the art in AR technologies through shared knowledge and resources.

Conclusion

Open-sourcing AI data and technologies related to Deepseek in AR glasses could lead to significant advancements in augmented reality applications. It would empower developers, researchers, and enthusiasts to innovate and create new solutions, ultimately enhancing the AR ecosystem as a whole. Such initiatives can also lead to community-driven improvements, ensuring that the technology remains cutting-edge and widely accessible.


What specific manufacturing techniques are used for high-FOV waveguides?

Posted by Technology Co., Ltd Shenzhen Mshilor

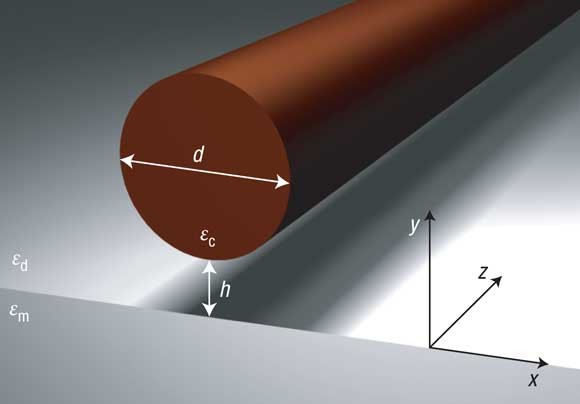

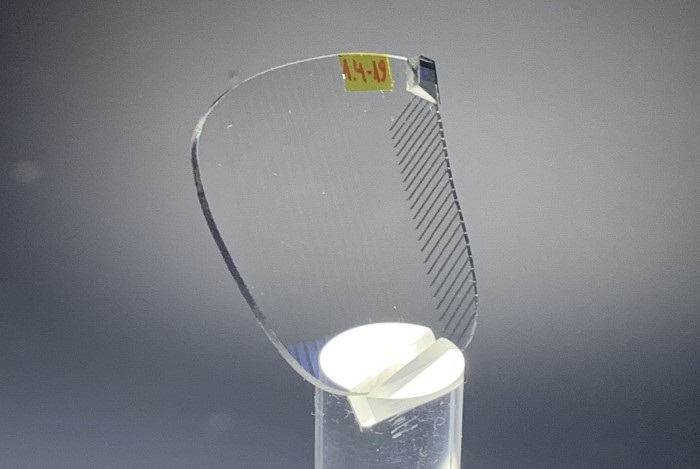

High-field-of-view (FOV) waveguides require advanced manufacturing techniques to achieve the desired optical performance and reliability. Here are some specific techniques commonly used:

1. Microfabrication

- Description: This technique involves creating intricate patterns at the microscale using methods such as photolithography, etching, and deposition.

- Application: Microfabrication is crucial for producing the fine structures needed in diffractive and reflective waveguides, enabling precise control over light manipulation.

2. Injection Molding

- Description: A process where molten material is injected into a mold to create waveguide shapes.

- Application: Used to produce large quantities of waveguides with consistent shapes, often planar waveguides. It helps maintain uniformity across production batches.

3. 3D Printing

- Description: Additive manufacturing techniques that build waveguides layer by layer from digital models.

- Application: Useful for prototyping and creating complex geometries that may be challenging with traditional methods. It's becoming more prevalent in producing customized or low-volume high-FOV waveguides.

4. Coating and Lamination

- Description: Applying thin layers of materials to enhance optical properties, such as anti-reflective coatings.

- Application: Essential for improving light transmission and reducing glare in high-FOV waveguides. Coatings can also protect against environmental factors.
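As a rough illustration of why a thin coating improves light transmission, the sketch below uses textbook normal-incidence results: the Fresnel reflectance of a bare interface, the quarter-wave thickness of a single-layer anti-reflective coating, and the reflectance of that coated surface at its design wavelength. The refractive indices and the wavelength are assumed example values, not figures for any particular waveguide.

```python
import math


def fresnel_reflectance(n1: float, n2: float) -> float:
    """Reflectance at normal incidence for an interface between indices n1 and n2."""
    return ((n1 - n2) / (n1 + n2)) ** 2


def quarter_wave_thickness_nm(wavelength_nm: float, n_coating: float) -> float:
    """Physical thickness of a quarter-wave layer at the design wavelength."""
    return wavelength_nm / (4.0 * n_coating)


def coated_reflectance(n_air: float, n_coating: float, n_substrate: float) -> float:
    """Reflectance of a quarter-wave single layer at its design wavelength (normal incidence)."""
    num = n_air * n_substrate - n_coating ** 2
    den = n_air * n_substrate + n_coating ** 2
    return (num / den) ** 2


# Assumed example values: air, a low-index coating, and a glass-like substrate.
N_AIR, N_COATING, N_SUBSTRATE = 1.0, 1.38, 1.5
DESIGN_WAVELENGTH_NM = 550.0

bare = fresnel_reflectance(N_AIR, N_SUBSTRATE)
coated = coated_reflectance(N_AIR, N_COATING, N_SUBSTRATE)
thickness = quarter_wave_thickness_nm(DESIGN_WAVELENGTH_NM, N_COATING)

print(f"bare surface reflectance:   {bare:.2%}")
print(f"coated surface reflectance: {coated:.2%}")
print(f"coating thickness:          {thickness:.1f} nm")
```

In this simple model the reflectance drops to zero when the coating index equals the square root of the substrate index. Real coatings are usually multilayer stacks designed for a range of wavelengths and angles.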

5. Laser Direct Writing

- Description: A technique where lasers are used to directly write patterns onto the substrate.

- Application: Allows for high precision in creating intricate waveguide structures, especially useful for creating features that manipulate light for a wider FOV.

6. Hot Embossing

- Description: A technique that involves pressing a mold into a thermoplastic material at elevated temperatures to create fine features.

- Application: Suitable for producing high-quality waveguides with complex geometries while maintaining good optical properties.

7. Etching Techniques

- Description: Chemical or plasma etching processes used to remove material and create the desired waveguide structures.

- Application: Important for shaping the waveguide and creating features that facilitate light guidance and manipulation.

Conclusion

High-FOV waveguides rely on a combination of advanced manufacturing techniques to achieve optimal optical performance. These methods enable the production of complex geometries and high-quality materials essential for immersive augmented reality experiences. As technology advances, new techniques may further enhance the capabilities and efficiency of waveguide production.


How do manufacturing complexities affect waveguide FOV and cost?

Posted by Technology Co., Ltd Shenzhen Mshilor

Manufacturing complexities play a significant role in determining both the field of view (FOV) and the cost of waveguides used in augmented reality (AR) glasses. Here’s how these factors interact:

1. Design Complexity

- Impact on FOV: More complex designs (e.g., volumetric or hybrid waveguides) can achieve wider FOVs by allowing light to be manipulated more effectively. However, achieving this complexity often requires advanced engineering and precise fabrication techniques.

- Impact on Cost: Increased design complexity usually means higher development costs, as more sophisticated tools, materials, and processes are needed.

2. Material Selection

- Impact on FOV: Certain materials can enhance optical performance and expand FOV. For example, high-quality optical polymers may allow for thinner designs while maintaining performance.

- Impact on Cost: Premium materials can drive up costs. Sourcing high-performance materials often requires specialized suppliers and may involve higher manufacturing expenses.

3. Precision Manufacturing

- Impact on FOV: Achieving a wide FOV typically requires precise control over the waveguide's shape and surface characteristics. Variations in these parameters can lead to distortions or reduced clarity.

- Impact on Cost: Precision manufacturing processes, such as microfabrication or advanced molding techniques, increase production costs due to the need for specialized equipment and expertise.

4. Quality Control

- Impact on FOV: Consistent quality control is essential to maintain optical clarity and FOV. Inconsistencies can lead to artifacts or reduced performance.

- Impact on Cost: Rigorous quality control measures add to production costs, as more testing and validation are required to ensure each unit meets specifications.

5. Scalability

- Impact on FOV: Some waveguide types may be easier to scale for mass production, allowing for wider FOV designs to be produced at a lower cost.

- Impact on Cost: If a manufacturing process is not easily scalable, costs can rise significantly when moving from prototype to large-scale production, particularly for complex waveguides.

Conclusion

The interplay between manufacturing complexities, waveguide FOV, and cost is intricate. While more complex designs can provide superior FOV, they typically come with increased manufacturing challenges and costs. As AR technology evolves, advancements in manufacturing techniques may help mitigate these issues, allowing for broader FOVs at more competitive prices.
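One simple way to see how precision and quality-control requirements feed into cost is a yield model: if only a fraction of units pass inspection, the cost of each good unit is the per-unit production and inspection cost divided by that fraction. The sketch below is a simplification, and every number in it is hypothetical, chosen only to show the arithmetic.

```python
def cost_per_good_unit(unit_cost: float, inspection_cost: float, yield_fraction: float) -> float:
    """Cost of one unit that passes inspection, spreading scrap cost over good units."""
    if not 0.0 < yield_fraction <= 1.0:
        raise ValueError("yield_fraction must be in (0, 1]")
    return (unit_cost + inspection_cost) / yield_fraction


# Hypothetical comparison: a simpler design with high yield versus a
# more complex, wider-FOV design with lower yield and costlier inspection.
simple_design = cost_per_good_unit(unit_cost=20.0, inspection_cost=2.0, yield_fraction=0.9)
complex_design = cost_per_good_unit(unit_cost=35.0, inspection_cost=5.0, yield_fraction=0.5)

print(f"simple design:  {simple_design:.2f} per good unit")
print(f"complex design: {complex_design:.2f} per good unit")
```

Under these assumed figures, the lower yield raises the effective cost of the complex design by more than its higher unit cost alone would.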


How do different waveguide types affect image quality?

Posted by Technology Co., Ltd Shenzhen Mshilor

Different waveguide types significantly impact image quality in augmented reality (AR) glasses. Here are some key aspects of how various waveguide technologies influence image performance:

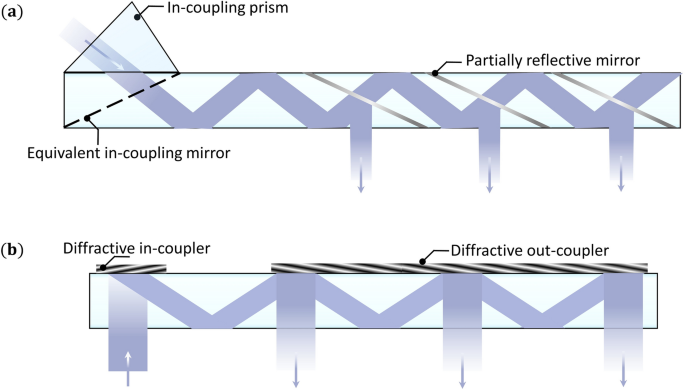

1. Planar Waveguides

- Description: These are flat waveguides that use total internal reflection to direct light.

- Image Quality:

  - Pros: Can produce bright and clear images with good color fidelity.

  - Cons: They may have limitations in field of view (FOV) and may exhibit distortion at the edges.
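Total internal reflection only traps light that strikes the waveguide surface at more than the critical angle, which follows from Snell's law as arcsin(n_outside / n_guide). The sketch below computes that angle for two assumed refractive indices. The index values are illustrative only and do not describe a specific product.

```python
import math


def critical_angle_deg(n_guide: float, n_outside: float = 1.0) -> float:
    """Critical angle for total internal reflection, measured from the surface normal."""
    if n_guide <= n_outside:
        raise ValueError("TIR requires n_guide > n_outside")
    return math.degrees(math.asin(n_outside / n_guide))


# Assumed example indices: an ordinary glass and a higher-index glass.
for n in (1.5, 1.8):
    print(f"n = {n}: critical angle = {critical_angle_deg(n):.1f} deg")
```

A higher index gives a smaller critical angle, so a wider range of ray angles stays guided. This is one reason the substrate material can limit the achievable FOV.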

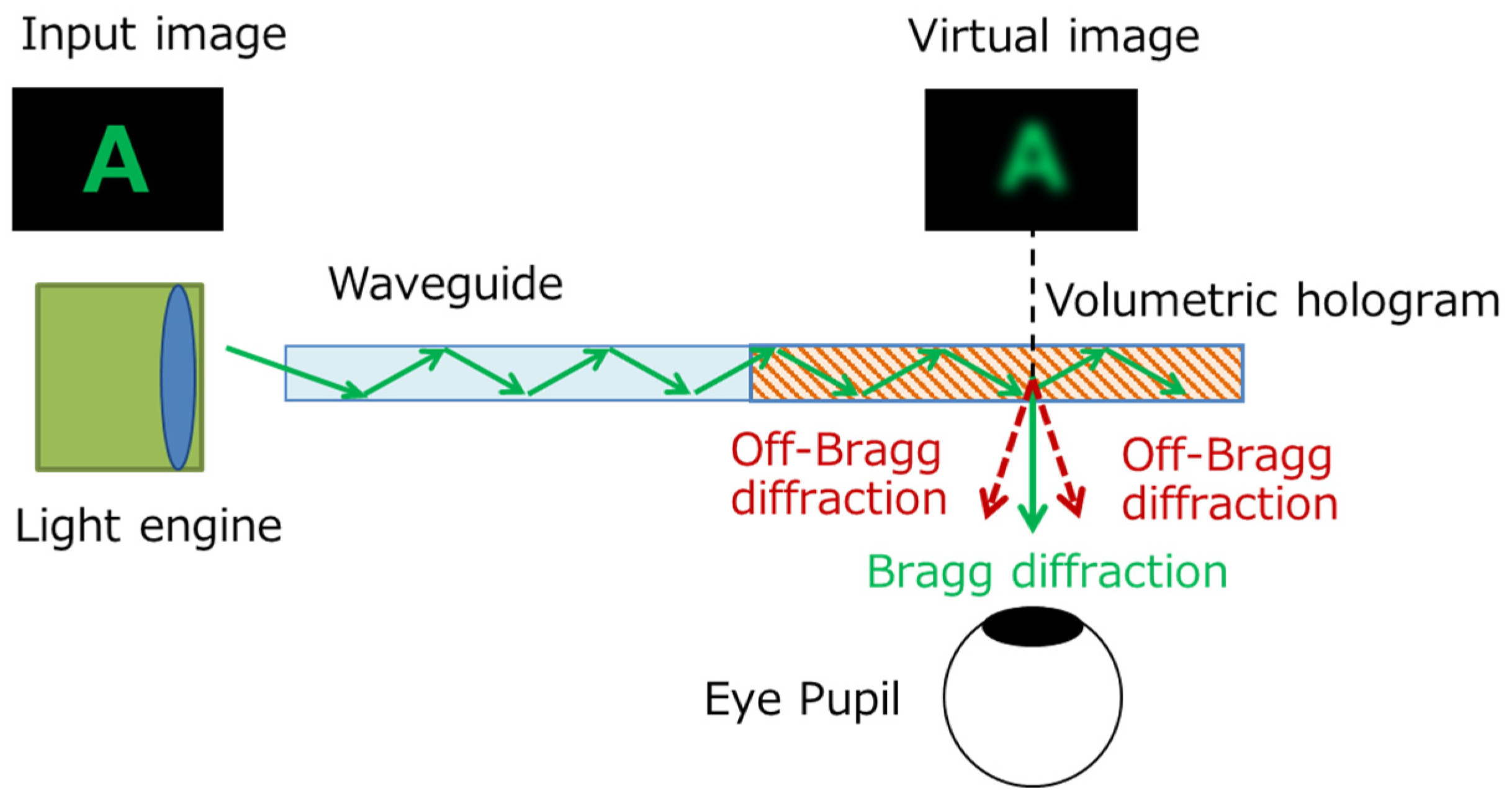

2. Volumetric Waveguides

- Description: These waveguides utilize 3D structures to guide light, often allowing for more complex light manipulation.

- Image Quality:

  - Pros: Can provide a wider FOV and improved depth perception.

  - Cons: They can be more complex to manufacture, potentially affecting cost and consistency in image quality.

3. Reflective Waveguides

- Description: These use mirrors or reflective surfaces to direct light.

- Image Quality:

  - Pros: Can achieve high brightness and contrast levels.

  - Cons: May produce artifacts or reflections that can detract from image clarity.

4. Diffractive Waveguides

- Description: These use diffraction gratings to bend and direct light.

- Image Quality:

  - Pros: Can enable very thin designs with a wide FOV.

  - Cons: May suffer from color fringing or loss of resolution due to diffraction effects.
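The color fringing mentioned above comes from the wavelength dependence of the grating equation. Using one common sign convention for light entering from air, n_guide * sin(theta_d) = sin(theta_i) + m * wavelength / pitch. The sketch below evaluates this for three wavelengths. The grating pitch and refractive index are assumed example values.

```python
import math
from typing import Optional


def diffraction_angle_deg(
    wavelength_nm: float,
    pitch_nm: float,
    n_guide: float,
    incidence_deg: float = 0.0,
    order: int = 1,
) -> Optional[float]:
    """Angle of the diffracted order inside the guide, or None if the order is evanescent."""
    s = (math.sin(math.radians(incidence_deg)) + order * wavelength_nm / pitch_nm) / n_guide
    if abs(s) > 1.0:
        return None
    return math.degrees(math.asin(s))


# Assumed example values, not taken from any specific waveguide design.
PITCH_NM = 400.0
N_GUIDE = 1.8

for name, wavelength in (("blue", 450.0), ("green", 530.0), ("red", 630.0)):
    angle = diffraction_angle_deg(wavelength, PITCH_NM, N_GUIDE)
    print(f"{name} ({wavelength:.0f} nm): {angle:.1f} deg inside the guide")
```

Because each color leaves the same grating at a different angle, the colors travel different paths through the guide. Designs typically have to compensate for this, for example with matched out-coupling gratings or separate layers per color.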

5. Hybrid Waveguides

- Description: Combine different waveguide technologies to optimize performance.

- Image Quality:

  - Pros: Can balance the strengths of various types, improving overall image clarity, brightness, and FOV.

  - Cons: Complexity in design and manufacturing may lead to challenges in consistency.

Conclusion

The choice of waveguide type plays a critical role in determining the image quality of AR glasses. Factors such as brightness, color accuracy, field of view, and distortion all vary between waveguide technologies, influencing the overall user experience. Designers must carefully balance these elements to achieve the desired performance in AR applications.


What key management system would be used in AI glasses?

Posted by Technology Co., Ltd Shenzhen Mshilor

A key management system (KMS) is essential for AI glasses to securely generate, store, and manage encryption keys. Here are some key features and types of KMS that could be used:

1. Hardware Security Module (HSM)

- Description: A physical device that manages and protects cryptographic keys, ensuring that keys are generated, stored, and used in a secure environment.

- Application: HSMs can be integrated into the AI glasses or used in connected cloud services to safeguard sensitive keys.

2. Cloud-Based KMS

- Description: Services offered by cloud providers (like AWS KMS, Azure Key Vault, or Google Cloud KMS) that facilitate key management in the cloud.

- Application: Useful for managing keys for data stored in the cloud, ensuring secure access and operations.

3. Local Key Storage

- Description: Keys are stored securely on the device, potentially using secure enclaves or trusted execution environments (TEE).

- Application: Provides fast access to keys while minimizing exposure to external threats.

4. Key Rotation and Expiration

- Description: Implementing policies to regularly rotate keys and set expiration dates to enhance security.

- Application: Reduces the risk of compromised keys being used for extended periods.
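A rotation policy can be sketched as a key record that carries its creation time and lifetime, plus a check that issues a fresh key once the lifetime has passed. The names `KeyRecord`, `new_key`, and `rotate_if_needed` are hypothetical, and the 30-day lifetime is an assumed value. A real device would keep the key material in secure hardware rather than in a Python object.

```python
import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class KeyRecord:
    key_id: str
    material: bytes
    created_at: datetime
    lifetime: timedelta

    def is_expired(self, now: datetime) -> bool:
        """True once the key has reached the end of its lifetime."""
        return now >= self.created_at + self.lifetime


def new_key(now: datetime, lifetime: timedelta) -> KeyRecord:
    """Generate a fresh 256-bit key with a random identifier."""
    return KeyRecord(
        key_id=secrets.token_hex(8),
        material=secrets.token_bytes(32),
        created_at=now,
        lifetime=lifetime,
    )


def rotate_if_needed(current: KeyRecord, now: datetime) -> KeyRecord:
    """Return the current key while it is valid, otherwise a replacement."""
    if current.is_expired(now):
        return new_key(now, current.lifetime)
    return current


start = datetime(2024, 1, 1, tzinfo=timezone.utc)
key = new_key(start, timedelta(days=30))  # assumed 30-day policy
later = rotate_if_needed(key, start + timedelta(days=31))
print("rotated:", later is not key)
```

Data encrypted under the old key still needs that key for decryption, so in practice expired keys are usually retired for new encryption but retained until the data is re-encrypted.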

5. Access Control Policies

- Description: Defining who or what can access specific keys based on user roles and permissions.

- Application: Ensures that only authorized applications and users can access sensitive keys.

6. Auditing and Logging

- Description: Maintaining logs of key usage, access, and changes for accountability and compliance.

- Application: Helps monitor key access and identify potential security breaches.
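Access control and auditing fit together naturally: each key request is checked against a policy, and the decision is logged whether or not access is granted. The sketch below is a minimal in-memory version. The class name, key names, and principal names are all hypothetical, and a production system would write to tamper-resistant storage instead of a Python list.

```python
from datetime import datetime, timezone


class KeyAccessController:
    """Checks key requests against a policy and records every decision."""

    def __init__(self, policy: dict[str, set[str]]):
        # Maps a key name to the set of principals allowed to use it.
        self._policy = policy
        self.audit_log: list[dict] = []

    def authorize(self, principal: str, key_name: str) -> bool:
        allowed = principal in self._policy.get(key_name, set())
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "principal": principal,
                "key": key_name,
                "allowed": allowed,
            }
        )
        return allowed


# Hypothetical policy: only the vision service may use the camera key.
controller = KeyAccessController({"camera-key": {"vision-service"}})
controller.authorize("vision-service", "camera-key")
controller.authorize("unknown-app", "camera-key")

for entry in controller.audit_log:
    print(entry["principal"], entry["key"], "allowed" if entry["allowed"] else "denied")
```

Logging denied requests as well as granted ones is what makes the log useful for spotting attempted misuse.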

7. Key Splitting and Sharding

- Description: Dividing keys into parts, with each part stored separately, to enhance security.

- Application: Even if one part is compromised, it cannot be used alone to access encrypted data.
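A minimal way to illustrate key splitting is an XOR scheme in which every share is required to rebuild the key: all shares but one are random bytes, and the last is the key XORed with the rest. This is a sketch of one simple technique, not necessarily what a given product uses. Threshold schemes such as Shamir's secret sharing allow recovery from a subset of shares instead. The function names are hypothetical.

```python
import secrets
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def split_key(key: bytes, parts: int) -> list[bytes]:
    """Split a key into `parts` shares, all of which are needed to recover it."""
    if parts < 2:
        raise ValueError("need at least two shares")
    random_shares = [secrets.token_bytes(len(key)) for _ in range(parts - 1)]
    final_share = reduce(xor_bytes, random_shares, key)
    return random_shares + [final_share]


def combine_shares(shares: list[bytes]) -> bytes:
    """Recover the key by XORing every share together."""
    return reduce(xor_bytes, shares)


key = secrets.token_bytes(32)
shares = split_key(key, parts=3)
print("recovered with all shares:", combine_shares(shares) == key)
```

Each share on its own is indistinguishable from random bytes, so one share stored on the glasses and another stored elsewhere reveal nothing individually.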

8. User-Managed Keys

- Description: Letting users manage their own keys, which gives them direct control over their data.

- Application: Useful for applications that require a high level of user privacy and control.

9. Integration with Identity Management

- Description: Linking key management with identity and access management systems to ensure that key access aligns with user identities.

- Application: Enhances security by tying key access to verified user credentials.

By combining these key management strategies, AI glasses can effectively manage encryption keys, ensuring that sensitive data remains secure and accessible only to authorized users and applications.
